AI Content Transparency

Established Meta's AI transparency system, driving compliance, improving user sentiment, and accelerating AI launches.

- Company: Meta

- Year: 2024

- Role: Design Lead, team of 12 across Facebook, Instagram, and Threads. Partnered with policy, legal, and comms. Drove alignment with VPs and C-level.

01

Problem

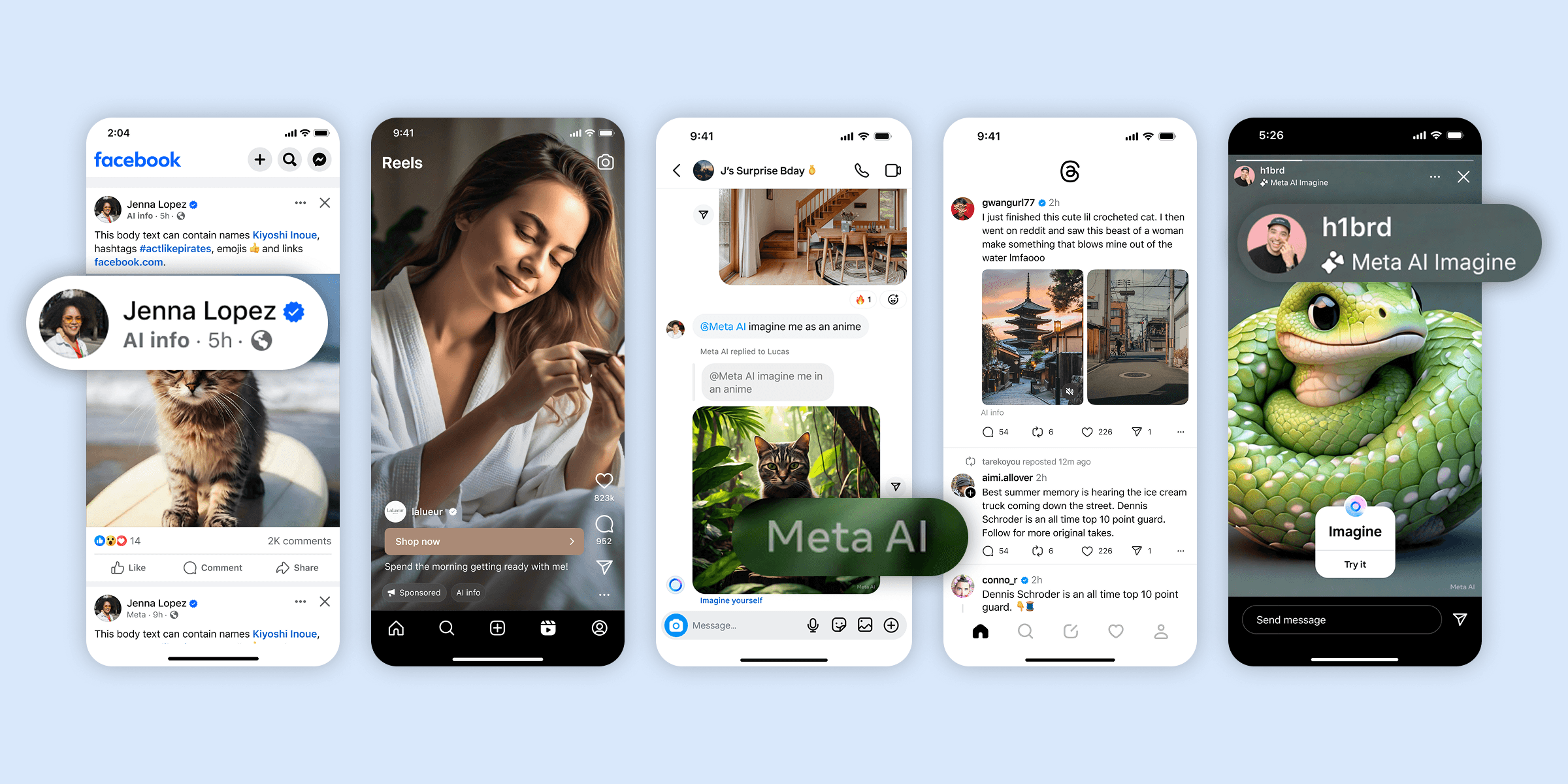

As AI-generated content becomes more prevalent, ensuring transparency is critical for building user trust—especially on social platforms like Facebook, Instagram, and Threads, where millions of creators generate and share content daily. When I took on this project, existing transparency practices were partially in place, but a deeper investigation—through UX research and experience audits—revealed significant gaps in how AI-generated content was communicated to users.

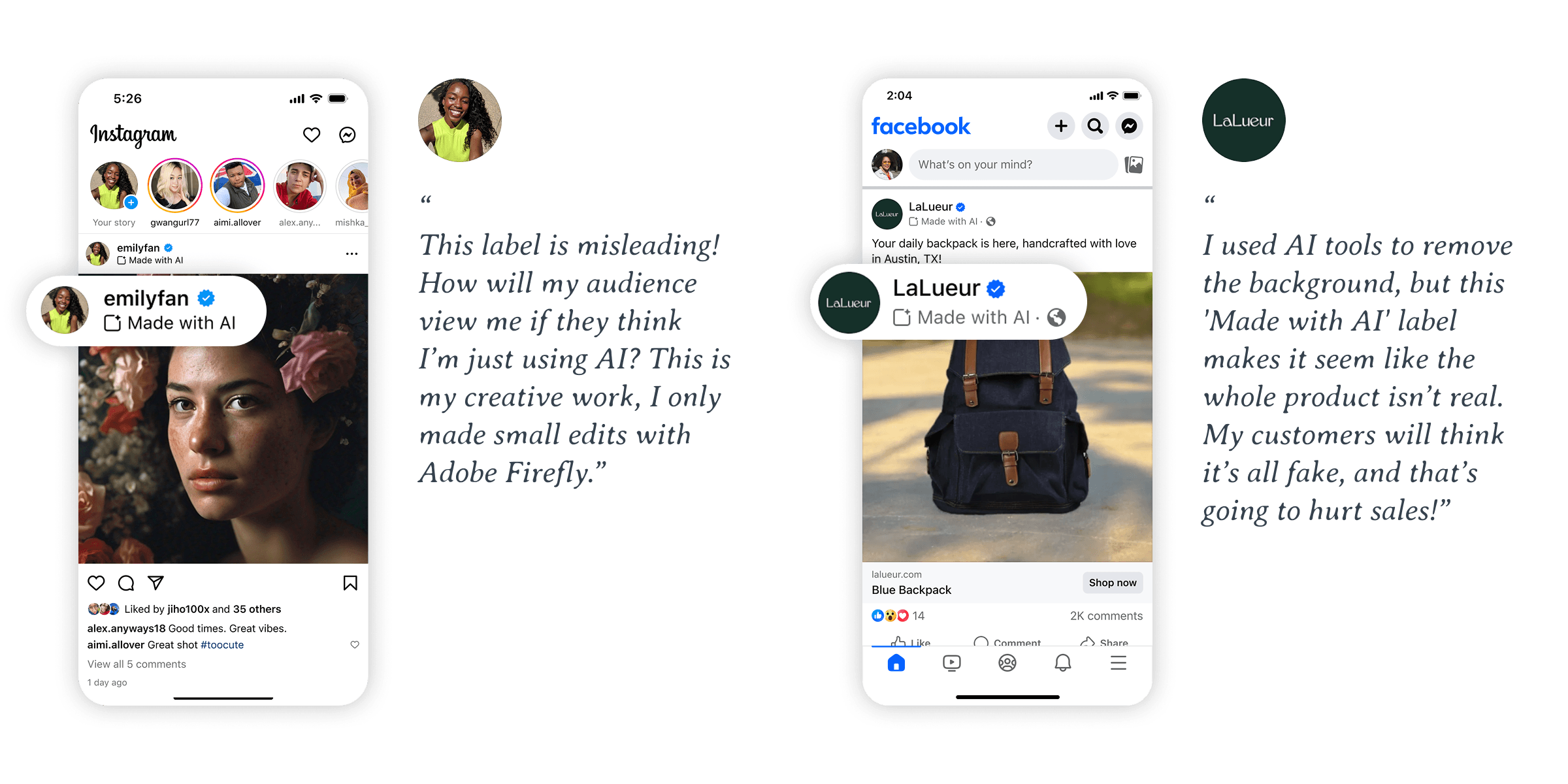

Problem #1: Negative user sentiment toward existing AI transparency attribution designs.

In early 2024, Meta launched the "Made with AI" transparency label for third-party content detected as involving AI, but quickly faced complaints from creators and advertisers. Creators reported that the label was incorrectly applied to images with only minor edits rather than to AI-generated content. Advertisers feared the label could lead users to question the authenticity of the products they were seeing, undermining consumer trust and brand credibility.

Problem #2: Conflicting surface UI elements.

Transparency indicators, such as watermarks and attribution labels, sometimes conflicted with UI components like tags and CTA buttons, creating usability issues.

Problem #3: Lack of transparency framework and guidance for existing and new AI features.

Without clear guidelines, a design component library, or design involvement that kept pace with evolving industry and regulatory expectations, design approaches were inconsistent across surfaces. Each new AI product also required extensive design effort, further increasing the risk of inconsistency.

02

Goal

After defining the key problems to solve and aligning with senior leadership on expectations, we established the primary goal of the project.

How might we implement AI transparency attribution in a way that meets regulatory requirements while minimizing negative impact on user experience, user trust, and brand integrity?

Since this project is horizontal and impacts all AI experiences across Facebook, Instagram, WhatsApp, and Threads, we structured a six-month plan with the following sub-projects to drive progress.

#1: Mitigate negative impact.

Position AI transparency attribution as a mark of authenticity and quality, rather than a warning label.

#2: Streamline and enhance UI components.

Resolve conflicts between transparency indicators and core UI elements to ensure a seamless user experience. Refine the design language to make AI transparency attribution less intrusive.

#3: Establish design framework.

Establish a standardized AI transparency framework and component library to ensure consistency, reduce fragmentation, and minimize redesign efforts across teams.

03

Design

Deep dive #1: Update the "Made with AI" label to "AI info" and simplify the design language.

We evaluated both the content string and the icon separately.

The "Made with AI" content string misleads users.

The "Made with AI" label is applied to any content where AI involvement is detected, whether fully AI-generated or partially edited with AI. User research revealed that most users associate the label with fully AI-generated content, which is true in less than 10% of cases (based on data pulled in May 2024).

Moreover, the content signals used for labeling are unreliable due to third-party tool limitations and metadata manipulation risks.

We explored alternatives such as "AI info", "AI", "AI-edited and AI-generated", and "Made with AI and Edited with AI", and chose "AI info": a neutral, clear label that provides relevant AI information, builds user trust, and remains flexible enough to scale without added complexity.

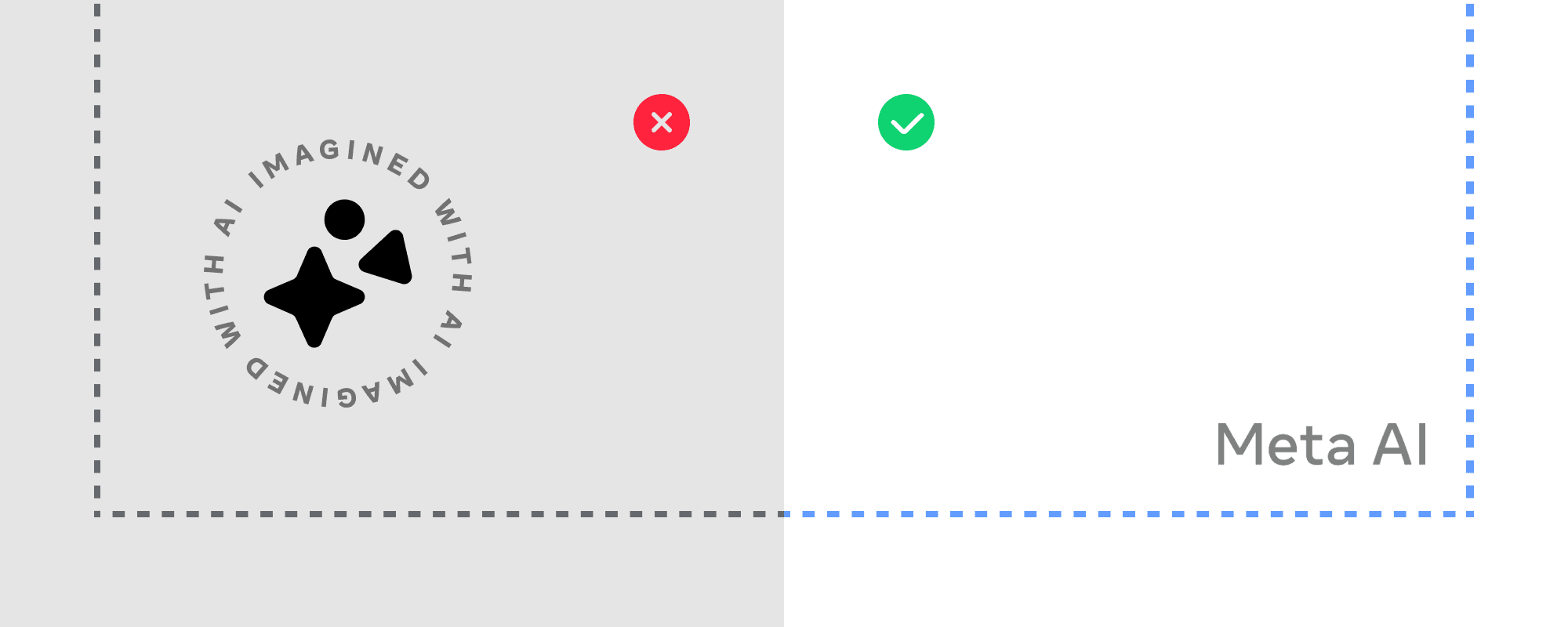

Icon clutters the user interface without adding value.

The original intention behind the icon was to signify AI-generated content and spark excitement. However, user research showed that the icon did not have this effect. Testing various versions, including ones with and without icons and additional icon designs, showed neutral results. Given the findings, we concluded that less is more and decided to remove the icon entirely to reduce clutter and improve the overall user experience.

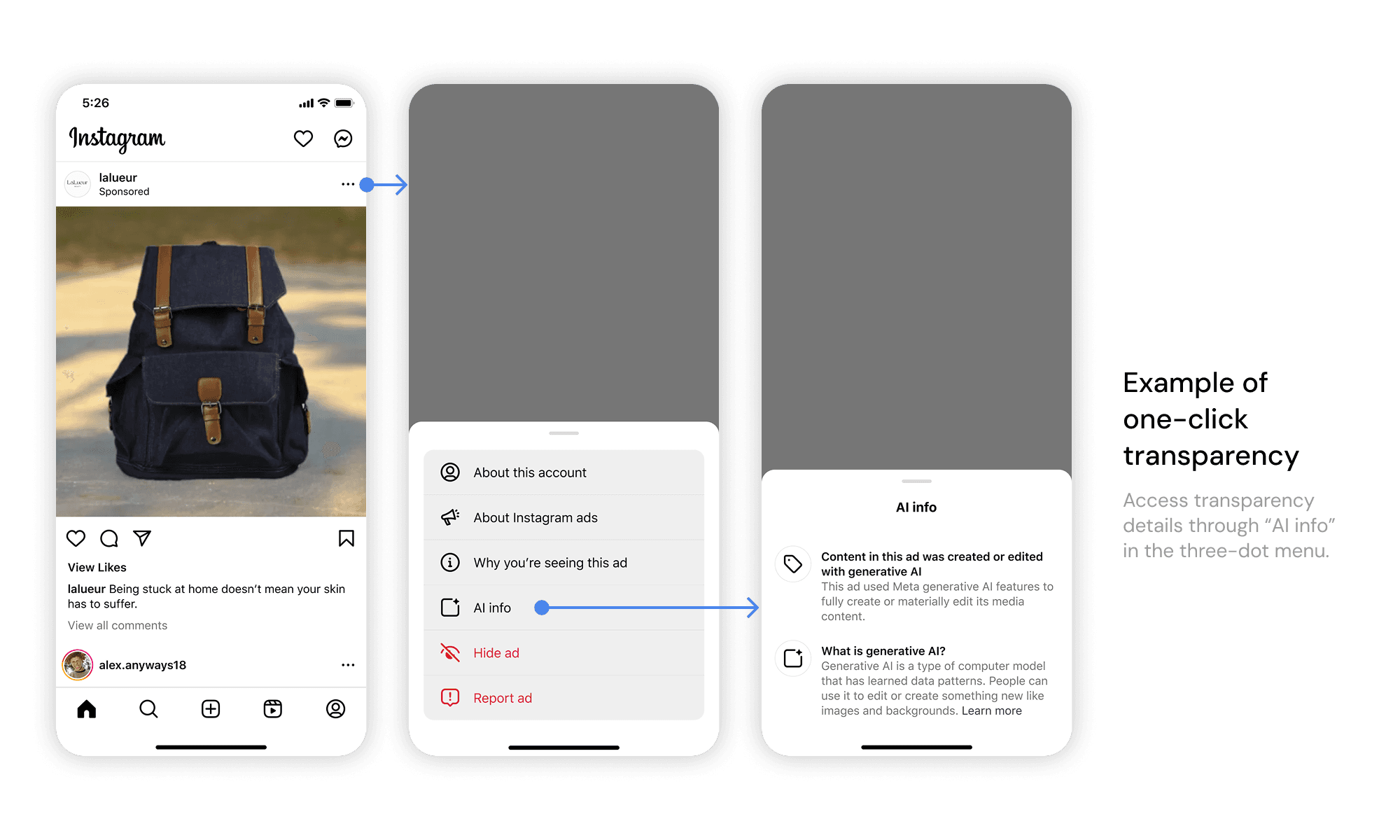

Deep dive #2: Implement a tiered transparency system for AI content attribution.

Not all AI features require the same transparency approach, so we collaborated with policy teams and regional policymakers to define the requirements. A tiered transparency system ensures that some AI content receives a visible label (zero-click transparency), while other types have AI information available in the three-dot menu (one-click transparency). This approach reduces label fatigue while still providing users with access to essential AI details.

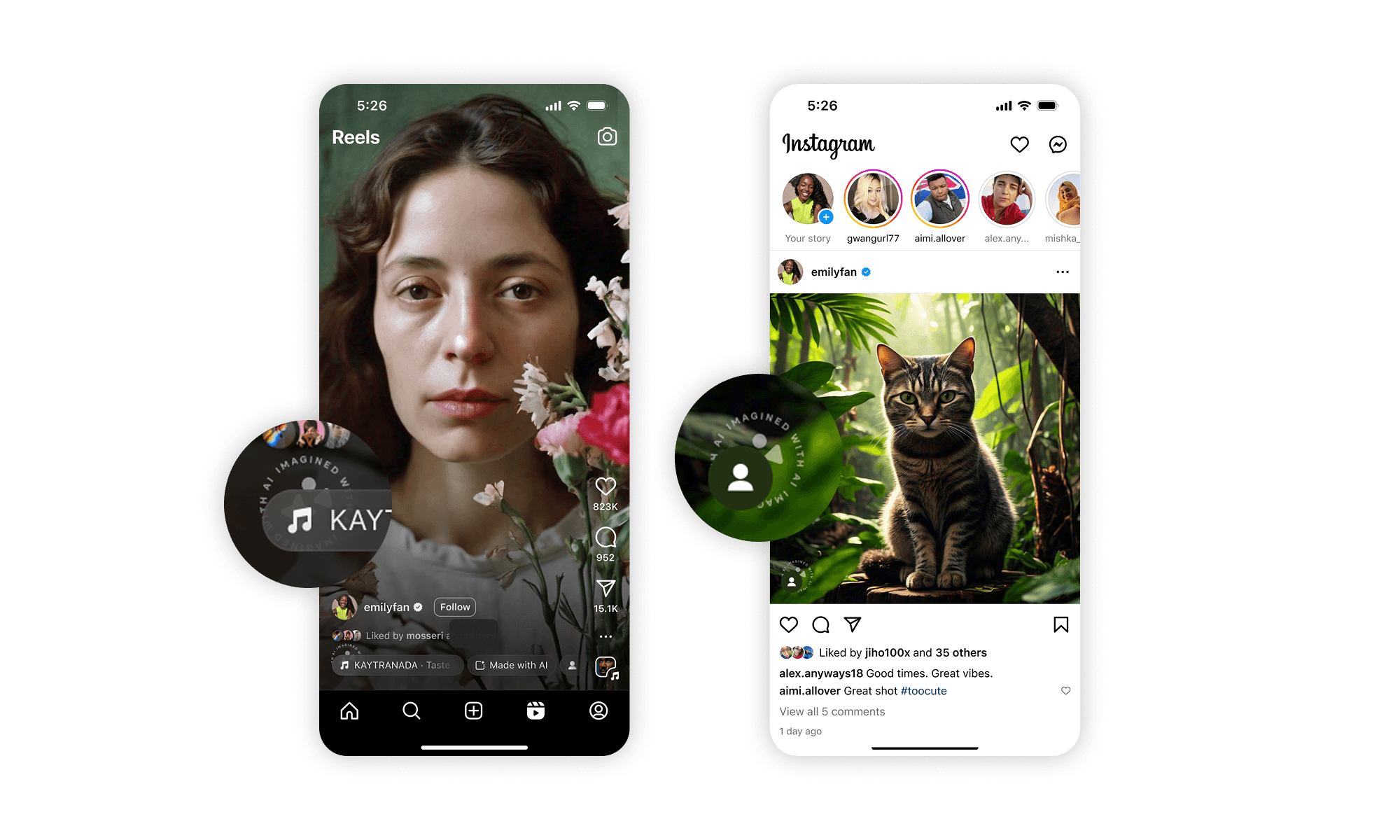

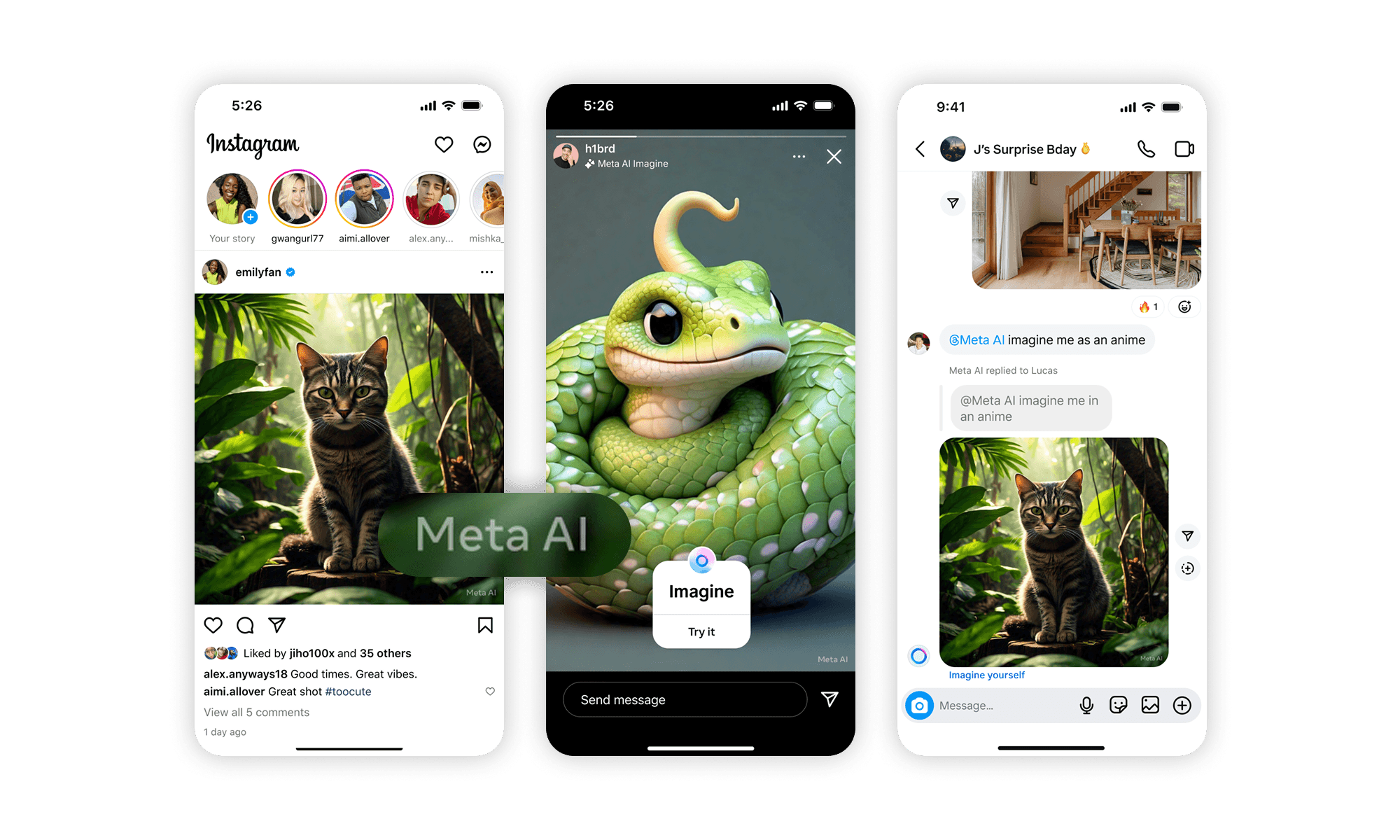
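For readers who think in code, the tiered rule can be sketched as a small decision function. This is only an illustrative sketch: the type names, the `regionRequiresVisibleLabel` flag, and the specific mapping from AI involvement to tier are assumptions made for the example, not the policy criteria actually agreed with policy teams.

```typescript
// Hypothetical model of a tiered disclosure rule. All names and the mapping
// below are illustrative assumptions, not Meta's actual policy or API.
type AiInvolvement = "none" | "ai_edited" | "ai_generated";

// "zero_click": a visible label on the content itself.
// "one_click": AI information available from the three-dot menu.
type DisclosureTier = "none" | "one_click" | "zero_click";

interface ContentSignal {
  involvement: AiInvolvement;
  // Assumed flag: regional policy requires a visible label for any AI involvement.
  regionRequiresVisibleLabel: boolean;
}

function disclosureTier(signal: ContentSignal): DisclosureTier {
  // No AI involvement detected: nothing to disclose.
  if (signal.involvement === "none") {
    return "none";
  }
  // Assumption: fully generated content, or any AI content in a region that
  // requires it, gets the visible label.
  if (signal.involvement === "ai_generated" || signal.regionRequiresVisibleLabel) {
    return "zero_click";
  }
  // Everything else keeps its AI information one tap away in the menu.
  return "one_click";
}
```

Keeping the rule in one place like this mirrors the intent of the tiered system: each surface asks which tier applies instead of deciding on its own when to show a label.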

Deep dive #3: Redesign the visible watermark to be less intrusive and seamlessly integrate with core UI elements.

We worked on watermark placement and icon design in parallel, anchoring our design explorations around a few principles:

- Positive attribution: The watermark should reflect positively on the Meta AI brand.

- Non-intrusive placement: The watermark should integrate smoothly into the UI without distracting from content.

- Clear but subtle signaling: The watermark should be noticeable without overwhelming the visual experience.

We redesigned the watermark with optimized size and placement to ensure AI content attribution is clear, non-intrusive, and positively associated with the Meta AI brand. Key updates include:

- Simplified the watermark design to reflect the Meta AI brand.

- Moved it from the bottom left—where core UI elements are clustered—to the bottom right for better visual balance.

- Decreased watermark size and margin to create a more seamless user experience.

Deep dive #4: Establish a cross-functional design framework.

To streamline transparency and attribution for GenAI content across teams and guide both existing and new AI features, I led a cross-functional team of product and content designers from Facebook, Instagram, and Ads. Together, we developed a centralized transparency framework and built a UI component library. This collective effort ensures design consistency, reduces user experience fragmentation, and minimizes future design efforts across teams.

04

Result

Result #1: Improved user sentiment.

After the "AI info" label replaced "Made with AI," user sentiment shifted from negative to neutral among both creators and consumers within two weeks, and it has remained neutral since.

Result #2: Neutral ad revenue impact.

Ad revenue is critical to Meta, so we closely monitor topline metrics for each transparency launch and update. Since June 2024, we've seen no negative impact on ad revenue. Advertisers have engaged positively with AI tools and features, with no major concerns raised.

Result #3: Less design time and accelerated AI feature launches.

Since the introduction of the transparency playbook, we've enabled the launch of six new AI features requiring content transparency. Each launch needed only a few days of design work, cutting design sprint timelines from weeks to days.

05

Next

#1 Embrace changes.

This work marks the beginning, not the end. The AI landscape, along with regulatory requirements, is rapidly evolving. We will continue collaborating closely with strategic partners to keep the transparency framework up to date and regularly maintain and update the playbook.

#2 Build in a governance process.

Moving forward, we will establish standard governance to streamline the process for future AI feature launches, ensuring consistency across teams and reducing fragmentation.

#3 Never skip UX research for risky launches.

The initial "Made with AI" launch lacked proper UX research pre-launch, a major oversight. Going forward, we will ensure that all transparency initiatives are informed by user research from the start to avoid such mistakes and improve the user experience.

#4 Explore product growth opportunities.

There are great opportunities to spark excitement around AI features by leveraging AI transparency attribution. As AI becomes more integral to content, we could make transparency more inspiring and engaging, celebrating innovation while building user trust.